Archive for February, 2010

VxClass, automated signature generation, RSA 2010

Wednesday, February 24th, 2010

Everybody is convening in San Francisco next week for RSA 2010, it appears: the big annual cocktail & business card exchange event. If you are interested in any of our technology (automated malware classification, automated signature generation, BinDiff, BinNavi) and would like to meet up with me, please contact info@zynamics.com 🙂

Reverse Engineering & Bug Hunting Trainings Class @ CSW

Sunday, February 21st, 2010

Hey all,

Sebastian and I will be teaching a bug hunting / reverse engineering training class at CanSecWest. With the increased sophistication of both exploitation mitigations and simple static checkers, acquiring an understanding of a piece of software when performing vulnerability research is becoming more and more important. In our class, we’ll teach techniques that help you become faster and more productive when reverse engineering. Concrete examples will be worked through for every abstract concept.

Things that we’ll do in this class:

- Generate full UML class diagrams from Acrobat Reader (UML generation from RTTI information)

- Understand Acrobat Reader’s JavaScript implementation by porting information from SpiderMonkey (Symbol Porting)

- Isolate complicated parsing code through differential debugging

- Use lots of Python to extend both IDA and BinNavi – use REIL to perform common tasks

- Read security updates

You can sign up for the class here.

Automating AV signature generation

Wednesday, February 17th, 2010

Hey all,

I have finally gotten around to writing about our automated byte signature generator. This will be a bird’s eye view, so if you want the details, you’ll have to read Christian’s thesis (in German) or wait for our academic paper (in English) to be accepted somewhere.

First, some background: One of the things we’re always working on at zynamics is VxClass, our automated malware classification system. The underlying core that drives VxClass is the BinDiff 3 engine (about which I have written elsewhere). An important insight about BinDiff’s algorithms is the following:

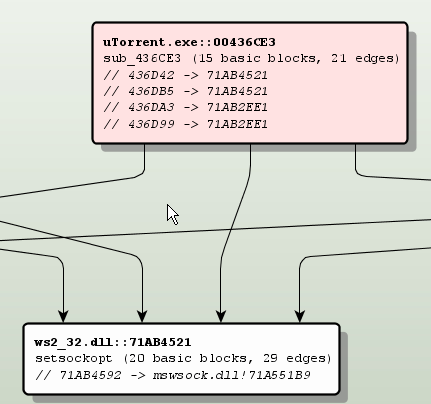

BinDiff works like an intersection operator between executables.

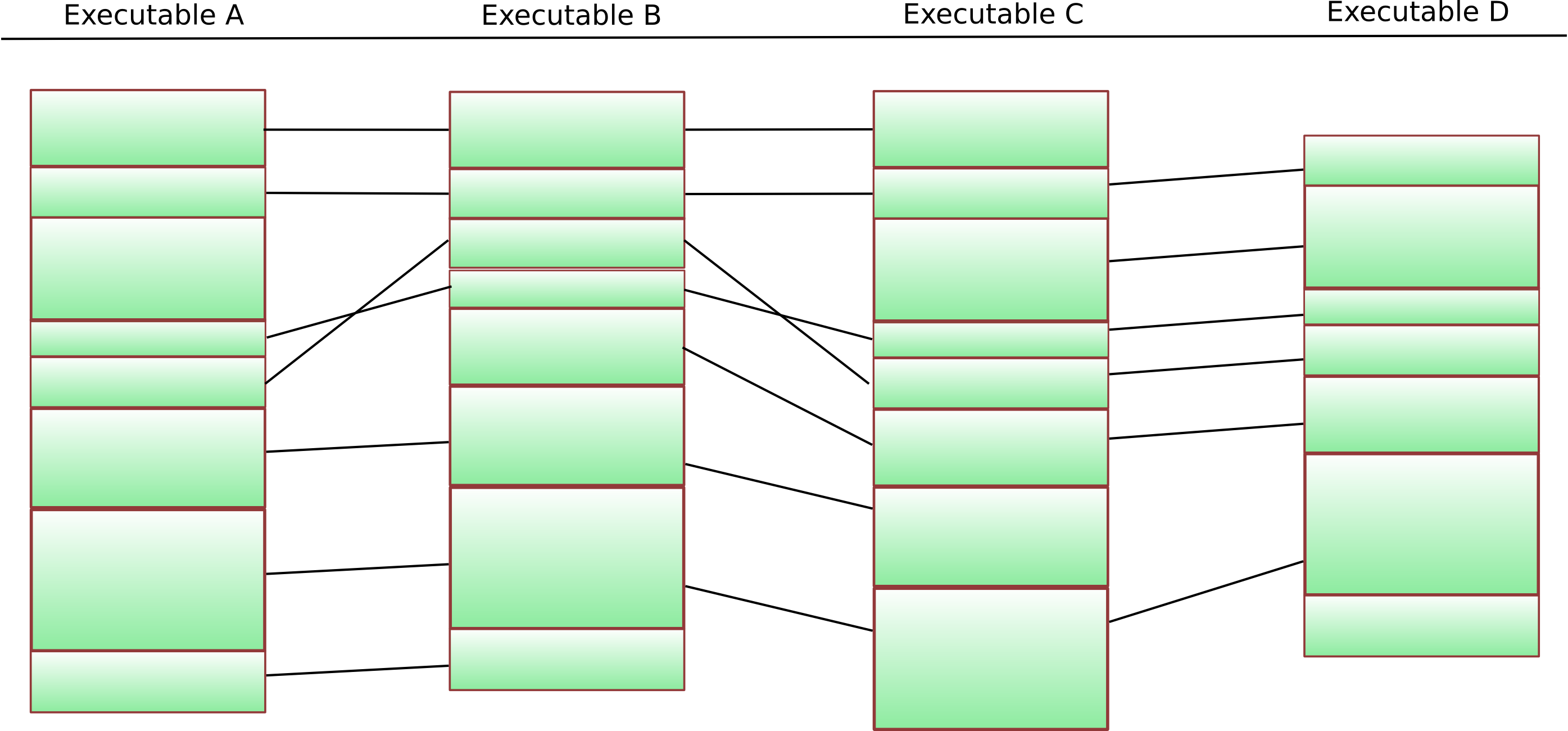

This is easily visualized as a Venn diagram: Running BinDiff on two executables identifies functions that are common to both executables and provides a mapping that makes it easy to find the corresponding function in executable B given any function in A.

Two executables and the common code

This intersection operator also forms the basis of the similarity score that VxClass calculates when classifying executables. This means that the malware families that are identified using VxClass share code. (Footnote: It might seem obvious that malware families should share code, but there is a lot of confusion around the term “malware family”, and before any confusion arises, it’s better to be explicit.)

So when we identify a number of executables as part of a cluster, what we mean is that code is shared pairwise: for each executable in the cluster, there is another executable in the cluster with which it shares a sizeable proportion of its code. Furthermore, the BinDiff algorithms provide us with a way of calculating the intersection of two executables. This means that we can also calculate the intersection of all executables in the cluster, and thus identify the stable core that is present in all samples of a malware family.
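To make “the intersection of all executables” concrete, here is a minimal Python sketch. It assumes, hypothetically, that each pairwise BinDiff result has been reduced to a dict mapping function addresses in the left executable to matched addresses in the right one, and that the results form a chain (A vs B, B vs C, ...), which is the shape of the vxsig invocation later in this post. It illustrates the idea only and is not the VxClass implementation.

```python
from typing import Dict, List, Tuple

# Hypothetical representation of one pairwise BinDiff result:
# function address in the left executable -> matched address in the right one.
Matches = Dict[int, int]


def stable_core(chain: List[Matches]) -> List[Tuple[int, ...]]:
    """Return one address tuple per function that survives every link of the chain.

    chain[0] maps A -> B, chain[1] maps B -> C, and so on. A function belongs
    to the stable core only if it can be followed through all links.
    """
    core: List[Tuple[int, ...]] = []
    if not chain:
        return core
    for start in sorted(chain[0]):
        path = [start]
        for link in chain:
            nxt = link.get(path[-1])
            if nxt is None:
                break  # matched in some executables, but not in all of them
            path.append(nxt)
        else:
            core.append(tuple(path))  # one address per executable in the chain
    return core


if __name__ == "__main__":
    chain = [
        {0x1000: 0x2000, 0x1100: 0x2100, 0x1200: 0x2200},  # A vs B
        {0x2000: 0x3000, 0x2200: 0x3300},                  # B vs C
    ]
    for addrs in stable_core(chain):
        print([hex(a) for a in addrs])
```

Following matches along a chain is a simplification: it assumes matches compose transitively, which real diff results need not do perfectly.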

What do we want from a “traditional” antivirus signature? We would like it to match all known samples of a family, and we would like it not to match anything else. The signature should be easy to scan for: ideally just a bunch of bytes with wildcards.

The bytes in the stable core form great candidates for the byte signature. The strategy to extract byte sequences then goes like this:

- Extract all functions in the stable core that occur in the same order in all executables in question

- From this, extract all basic blocks in the stable core that occur in the same order in all executables in question

- From this, extract (per basic block) the sequences of bytes that occur in the same order in all executables in question

- If any gaps occur, fill them with wildcards
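The last step, filling gaps with wildcards, can be sketched in a few lines of Python. This sketch assumes we already have a byte sequence that is common to all samples (in order) and inserts a “*” between two consecutive common bytes whenever at least one sample has other bytes between them, mirroring the “*” (any number of bytes) wildcard of ClamAV body signatures. Locating the common bytes through a leftmost greedy embedding is a simplification chosen for this sketch, not necessarily what VxClass does.

```python
from typing import List, Sequence


def embed(common: bytes, sample: bytes) -> List[int]:
    """Leftmost positions at which `common` occurs as a subsequence of `sample`."""
    positions, start = [], 0
    for value in common:
        idx = sample.find(bytes([value]), start)
        if idx < 0:
            raise ValueError("common bytes are not a subsequence of this sample")
        positions.append(idx)
        start = idx + 1
    return positions


def to_pattern(common: bytes, samples: Sequence[bytes]) -> str:
    """Render `common` as hex, with '*' wherever any sample has a gap."""
    if not common:
        return ""
    embeddings = [embed(common, s) for s in samples]
    parts = [f"{common[0]:02x}"]
    for k in range(1, len(common)):
        # A gap exists if, in some sample, byte k does not directly follow byte k-1.
        if any(pos[k] != pos[k - 1] + 1 for pos in embeddings):
            parts.append("*")
        parts.append(f"{common[k]:02x}")
    return "".join(parts)


if __name__ == "__main__":
    samples = [b"\x55\x8b\xec\x90\x83\xec", b"\x55\x8b\xec\xcc\xcc\x83\xec"]
    print(to_pattern(b"\x55\x8b\xec\x83\xec", samples))  # 558bec*83ec
```

Because every sample's own embedding satisfies each adjacency the pattern demands, the resulting pattern matches every sample it was built from.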

Sounds easy, eh? 🙂 Let’s understand the first step in the process better by looking at a diagram:

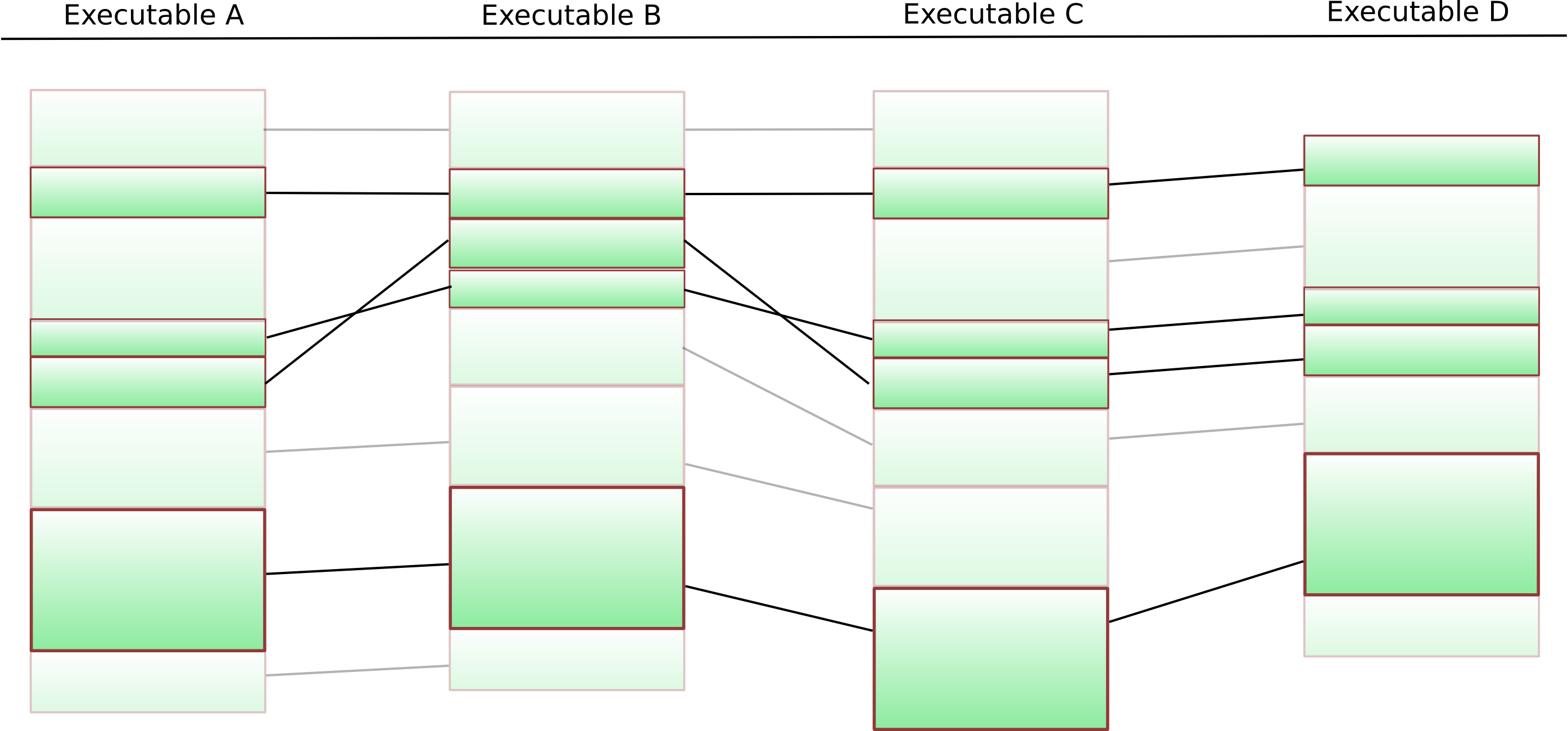

The columns show four different executables – each green block represents one particular function. The black lines are “matches” that the BinDiff algorithms have identified. The first step is now to identify functions that are present in each of the executables. This is a fairly easy thing to do, and if we remove the functions that do not occur everywhere from our diagram, we get something like this:

Now, we of course still have to remove functions that do not appear in the same order in all executables. The best way to do this is using a k-LCS algorithm.

What is a k-LCS algorithm? LCS stands for longest common subsequence: given two sequences over the same alphabet, an LCS algorithm finds the longest sequence that is a subsequence of both. LCS calculations form the backbone of the UNIX diff command line tool. A natural extension of this problem is finding the longest common subsequence of many sequences (not just two), and this extension is called k-LCS.
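For readers who haven’t met LCS before, here is the textbook dynamic-programming solution for two sequences in Python, with quadratic time and space. It is the classic algorithm, shown for illustration; it is not the code used by VxClass or by diff.

```python
from typing import List, Sequence, TypeVar

T = TypeVar("T")


def lcs(a: Sequence[T], b: Sequence[T]) -> List[T]:
    """Longest common subsequence of two sequences via dynamic programming."""
    m, n = len(a), len(b)
    # length[i][j] = LCS length of the suffixes a[i:] and b[j:]
    length = [[0] * (n + 1) for _ in range(m + 1)]
    for i in range(m - 1, -1, -1):
        for j in range(n - 1, -1, -1):
            if a[i] == b[j]:
                length[i][j] = length[i + 1][j + 1] + 1
            else:
                length[i][j] = max(length[i + 1][j], length[i][j + 1])
    # Walk the table forwards to recover one LCS.
    result: List[T] = []
    i = j = 0
    while i < m and j < n:
        if a[i] == b[j]:
            result.append(a[i])
            i, j = i + 1, j + 1
        elif length[i + 1][j] >= length[i][j + 1]:
            i += 1
        else:
            j += 1
    return result


if __name__ == "__main__":
    # Two executables listing the same functions, with f2 and f3 swapped.
    print(lcs(["f1", "f2", "f3", "f4"], ["f1", "f3", "f2", "f4"]))  # ['f1', 'f3', 'f4']
```

When several LCSs of equal length exist (here f1, f2, f4 would also do), the tie-breaking in the backward walk decides which one is returned.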

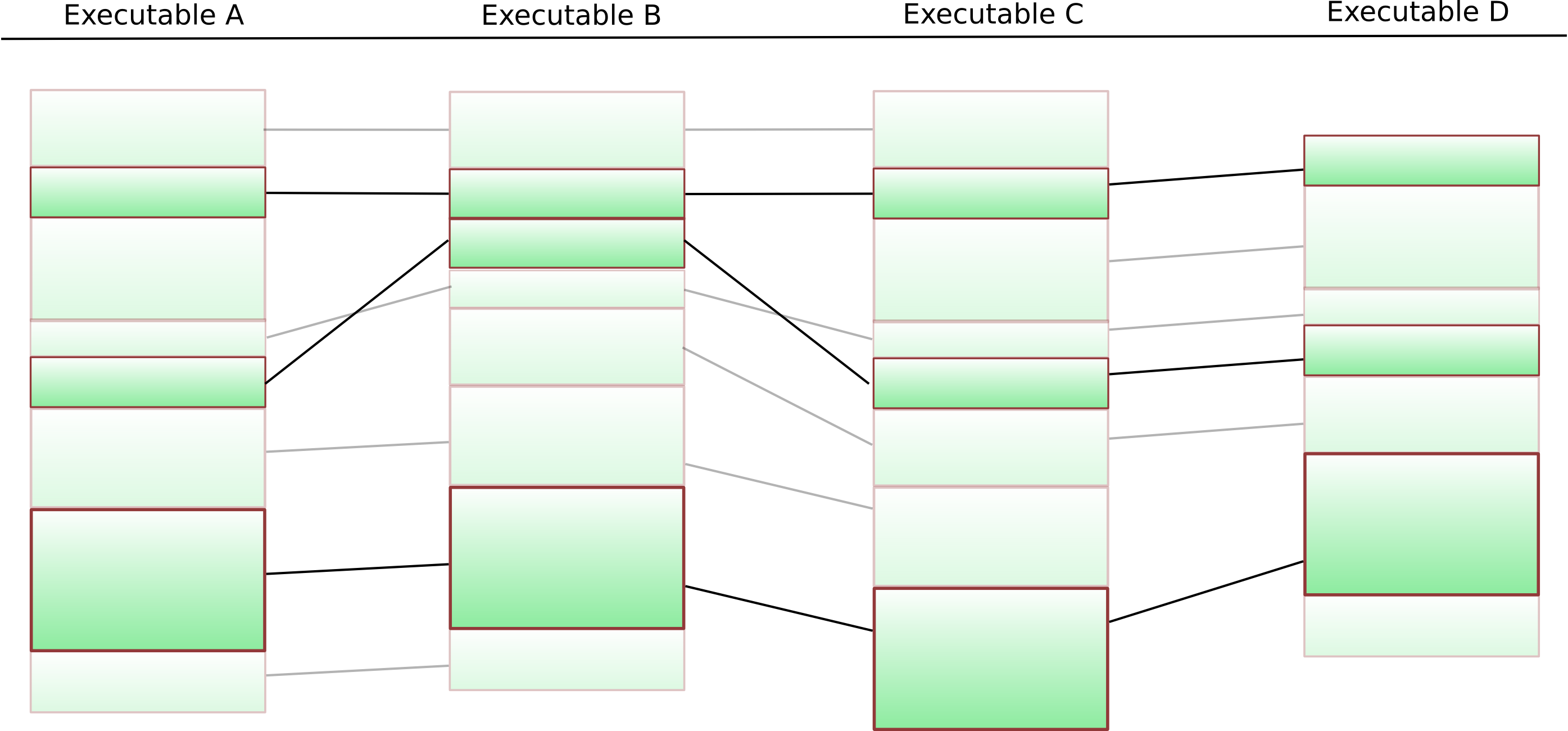

This suffers from the slight drawback that k-LCS on arbitrary sequences is NP-hard, but in our particular case the situation is much easier: we can simply put an arbitrary ordering on the functions, and our “k-LCS on sequences” reduces to “k-LCS on sequences that are permutations of each other”, in which case the entire problem can be solved efficiently (check Christian’s diploma thesis for details). The final result looks like this:
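Why does the permutation case become easy? If every function occurs exactly once in every sequence, a common subsequence is exactly a chain of functions in which each one precedes the next in all sequences, so k-LCS turns into a longest path in a DAG. Here is a minimal Python sketch of that reduction, running in O(k·n²); it is a standard textbook approach and not necessarily the algorithm from Christian’s thesis. It assumes the functions have already been given common identifiers (for example via the BinDiff matches) and that all sequences contain the same identifiers.

```python
from typing import Hashable, List, Sequence


def k_lcs_permutations(seqs: Sequence[Sequence[Hashable]]) -> List[Hashable]:
    """Longest common subsequence of k sequences that are permutations of one another."""
    if not seqs or not seqs[0]:
        return []
    # Position of every element in every sequence.
    pos = [{item: i for i, item in enumerate(seq)} for seq in seqs]
    base = seqs[0]
    n = len(base)
    best = [1] * n   # best[j]: length of the longest chain ending at base[j]
    prev = [-1] * n  # prev[j]: predecessor of base[j] on that chain
    # Any common subsequence is increasing in seqs[0], so this order is topological.
    for j in range(n):
        for i in range(j):
            # base[i] may precede base[j] only if it does so in every sequence.
            if best[i] + 1 > best[j] and all(p[base[i]] < p[base[j]] for p in pos):
                best[j] = best[i] + 1
                prev[j] = i
    # Walk back from the end of the longest chain.
    j = max(range(n), key=best.__getitem__)
    chain = []
    while j != -1:
        chain.append(base[j])
        j = prev[j]
    return chain[::-1]


if __name__ == "__main__":
    print(k_lcs_permutations([list("abcd"), list("bacd"), list("acbd")]))  # ['a', 'c', 'd']
```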

Given the remaining functions, the entire process can be repeated on the basic block level. The final result of this is a list of basic blocks that are present in all executables in our cluster in the same order. We switch to a fast approximate k-LCS algorithm on the byte sequences obtained from these basic blocks. Any gaps are filled with “*”-wildcards.
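The post doesn’t say which fast approximate k-LCS algorithm is used at the byte level. One simple heuristic, shown here purely as a stand-in, is to fold pairwise LCS across the samples: LCS(LCS(LCS(s1, s2), s3), ...). Every intermediate result is a subsequence of all samples seen so far, so the final result is common to all of them, but it is not guaranteed to be the longest such sequence and can depend on the order of the samples.

```python
from functools import reduce
from typing import Sequence


def lcs_bytes(a: bytes, b: bytes) -> bytes:
    """Exact LCS of two byte strings (quadratic dynamic programming)."""
    m, n = len(a), len(b)
    length = [[0] * (n + 1) for _ in range(m + 1)]
    for i in range(m - 1, -1, -1):
        for j in range(n - 1, -1, -1):
            if a[i] == b[j]:
                length[i][j] = length[i + 1][j + 1] + 1
            else:
                length[i][j] = max(length[i + 1][j], length[i][j + 1])
    out, i, j = bytearray(), 0, 0
    while i < m and j < n:
        if a[i] == b[j]:
            out.append(a[i])
            i, j = i + 1, j + 1
        elif length[i + 1][j] >= length[i][j + 1]:
            i += 1
        else:
            j += 1
    return bytes(out)


def approx_k_lcs(samples: Sequence[bytes]) -> bytes:
    """Fold pairwise LCS over all samples (requires at least one sample).

    The result is a subsequence of every sample, but not necessarily the longest one.
    """
    return reduce(lcs_bytes, samples)


if __name__ == "__main__":
    print(approx_k_lcs([b"abcde", b"abxde", b"zabde"]))  # b'abde'
```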

The result is quite cool: VxClass can automatically sort new malicious software into clusters of similar executables, and subsequently generate a traditional AV signature from each cluster. By construction, this AV signature will match all members of the cluster. Furthermore, it will have some predictive effect: the variable parts of the malware get whittled away as you add more executables to generate the signature from.

We have, of course, glossed over a number of subtleties here: It is possible that the signature obtained in this manner is empty. One also needs to be careful when dealing with statically linked libraries (otherwise the signature will have a large number of false positives).

So how well does this work in practice?

We will now go over a small case study: we throw a few thousand files into our VxClass test system and let it run for a while. We then take the resulting clusters and automatically generate signatures from them. Some of the signatures can be seen here. They are in ClamAV format and, of course, they work on the unpacked binaries, but any good AV engine has a halfway decent unpacker anyhow.
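ClamAV’s extended signature format (.ndb) stores one signature per line as Name:TargetType:Offset:HexSignature, where a TargetType of 1 means PE files and an Offset of “*” means “anywhere in the file”. A small Python helper that emits such a line from a wildcard pattern might look like this. The signature name is hypothetical, and the validation deliberately accepts only whole hex bytes separated by single “*” wildcards, a narrow subset of what ClamAV allows.

```python
import string

HEX_DIGITS = set(string.hexdigits)


def ndb_line(name: str, pattern: str, target_type: int = 1, offset: str = "*") -> str:
    """Format one ClamAV .ndb line: Name:TargetType:Offset:HexSignature."""
    # Accept only runs of whole hex bytes separated by single '*' wildcards.
    for chunk in pattern.split("*"):
        if not chunk or len(chunk) % 2 or not set(chunk) <= HEX_DIGITS:
            raise ValueError(f"unsupported pattern chunk: {chunk!r}")
    return f"{name}:{target_type}:{offset}:{pattern}"


if __name__ == "__main__":
    # Hypothetical signature name; the pattern is a toy example.
    print(ndb_line("Auto.Generated.Example-1", "558bec*83ec"))
```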

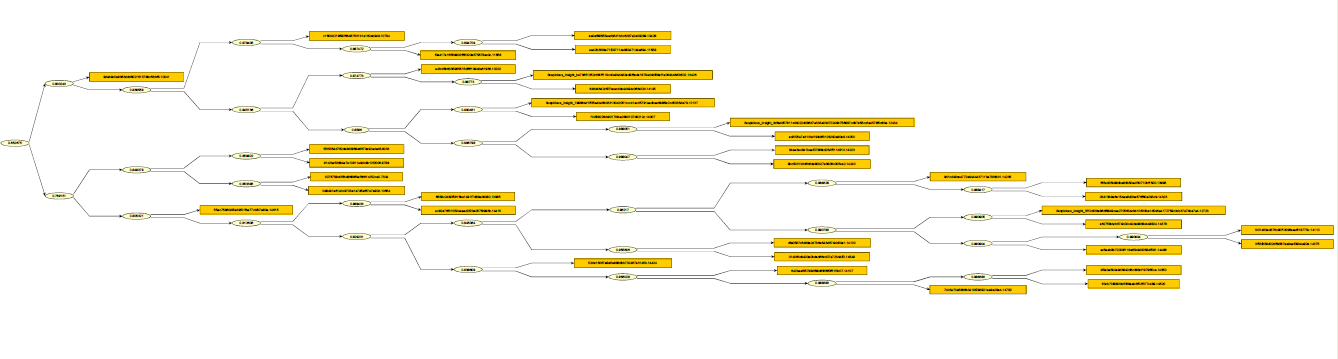

I will go through the process step by step for one particular cluster. The cluster itself can be viewed here. A low-resolution shot of it would be the following:

So what do the detection rates for this cluster look like in traditional AVs? Well, I threw the files into VirusTotal and created the following graphic indicating the detection rates for these files: each row in this matrix represents an executable, and each column represents a different antivirus product (I have omitted the names). A yellow field at row 5 and column 10 means “the fifth executable in the cluster was detected by the tenth AV”; a white field at row 2 and column 1 means “the second executable in the cluster was not detected by the first AV”.

[Figure: VirusTotal detection matrix for the 32 executables in this cluster (rows) across 40 antivirus products (columns); a yellow cell marks a detection, a white cell no detection.]

What we can see here is the following:

- No antivirus product detects all elements of this cluster

- Detection rates vary widely for this cluster: some AVs detect 25 out of 32 files (78%), others 0 out of 32 (0%)
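The summary numbers above are simple column arithmetic on the detection matrix: a product’s detection rate is the number of detected files in its column divided by the number of files, so 25 of 32 gives 25/32 ≈ 78%. The sketch below uses a toy matrix, not the actual VirusTotal results.

```python
from typing import List, Sequence


def detection_rates(matrix: Sequence[Sequence[bool]]) -> List[float]:
    """matrix[row][col] is True if executable `row` was detected by product `col`."""
    if not matrix:
        return []
    rows, cols = len(matrix), len(matrix[0])
    return [sum(matrix[r][c] for r in range(rows)) / rows for c in range(cols)]


if __name__ == "__main__":
    # Toy matrix: 32 files, 2 products. Product 0 detects the first 25 files, product 1 none.
    toy = [[r < 25, False] for r in range(32)]
    rates = detection_rates(toy)
    print([f"{rate:.0%}" for rate in rates])  # ['78%', '0%']
    print("some product detects everything:", any(rate == 1.0 for rate in rates))
```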

If we inspect the names along with the detection results (which you can do in this table), we can also see which different names are assigned by the different AVs to this malware.

So, let’s run our signature generator on this cluster of executables.

time /opt/vxclass/bin/vxsig "7304 vs 9789.BinDiff" "9789 vs 10041.BinDiff" "10041 vs 10202.BinDiff" "10202 vs 10428.BinDiff" "10428 vs 10654.BinDiff" "10654 vs 10794.BinDiff" "10794 vs 11558.BinDiff" "11558 vs 11658.BinDiff" "11658 vs 12137.BinDiff" "12137 vs 12434.BinDiff" "12434 vs 12723.BinDiff" "12723 vs 13426.BinDiff" "13426 vs 13985.BinDiff" "13985 vs 13995.BinDiff" "13995 vs 14007.BinDiff" "14007 vs 14023.BinDiff" "14023 vs 14050.BinDiff" "14050 vs 14100.BinDiff" "14100 vs 14107.BinDiff" "14107 vs 14110.BinDiff" "14110 vs 14145.BinDiff" "14145 vs 14235.BinDiff" "14235 vs 14240.BinDiff" "14240 vs 14323.BinDiff" "14323 vs 14350.BinDiff" "14350 vs 14375.BinDiff" "14375 vs 14378.BinDiff" "14378 vs 14415.BinDiff" "14415 vs 14424.BinDiff" "14424 vs 14486.BinDiff" "14486 vs 14520.BinDiff" "14520 vs 14549.BinDiff" "14549 vs 14615.BinDiff" "14615 vs 14700.BinDiff"

The entire thing takes roughly 40 seconds to run. The resulting signature can be viewed here.

So, to summarize:

- Using VxClass, we can quickly sort new malicious executables into clusters based on the amount of code they share

- Using the results from our BinDiff and some clever algorithmic trickery, we can generate “traditional” byte signatures automatically

- These signatures are guaranteed to match on all executables that were used in the construction of the signature

- The signatures have some predictive power, too: In a drastic example we generated a signature from 15 Swizzor variants that then went on to detect 929 new versions of the malware

- These are post-unpacking signatures, i.e. your scanning engine needs to do a halfway decent job at unpacking in order for these signatures to work

If you happen to work for an AV company and think this technology might be useful for you, please contact info@zynamics.com 🙂

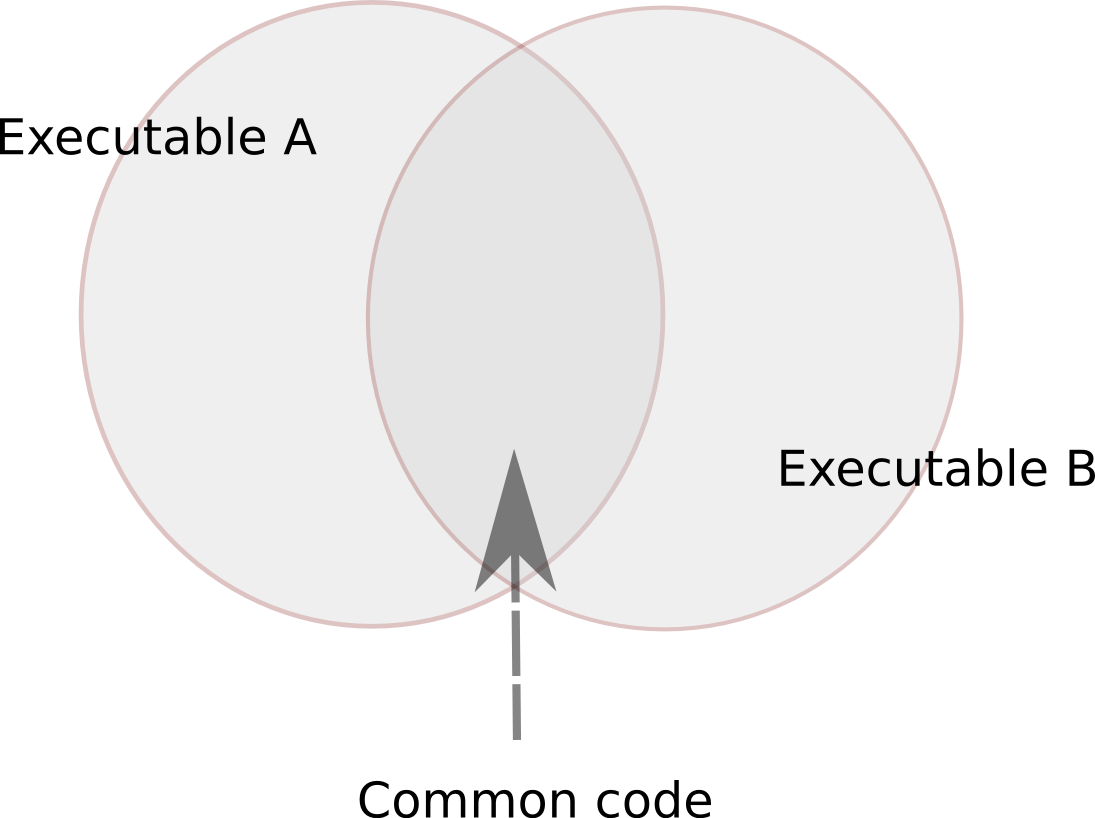

Resolving dynamic function calls with BinNavi

Sunday, February 14th, 2010

One of the big problems in static code analysis is function calls with non-static call targets. These function calls can call different target functions depending on the current program state: one moment they call one function, and the next they might call a completely different one. Popular examples of such dynamic function calls are virtual functions (as in C++) and function pointers to callback functions.

Statically finding the set of potential call targets of a dynamic function call is very difficult. While this area of program analysis has seen a lot of research in recent years, the problem is undecidable in general and can get really ugly really quickly. A simpler way to resolve the call targets of dynamic function calls is to execute the target program and log where the dynamic function calls go.

In BinNavi we have implemented a way to resolve dynamic function calls within modules as well as dynamic function calls that cross module boundaries. The general idea behind our code is this:

- Figure out where the dynamic function calls are located and put breakpoints on them

- Every time such a breakpoint is hit, execute a single step and find out where the call is going

- Keep going until enough data has been collected
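The bookkeeping side of this loop is simple. Below is a minimal Python sketch that consumes (call site, observed target) pairs, as a debugger would report them after single-stepping each breakpointed call, records every distinct target, and flags targets that cross into another module. The event stream and the module list are hypothetical inputs; the sketch does not talk to a real debugger or to BinNavi’s API.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Optional, Sequence, Set, Tuple

Module = Tuple[str, int, int]  # (name, base address, size); hypothetical layout


def module_of(addr: int, modules: Sequence[Module]) -> Optional[str]:
    """Name of the module whose address range contains addr, if any."""
    for name, base, size in modules:
        if base <= addr < base + size:
            return name
    return None


def resolve_calls(
    events: Iterable[Tuple[int, int]], modules: Sequence[Module]
) -> Dict[int, List[Tuple[int, Optional[str], bool]]]:
    """Map each call site to (target, target module, crosses module boundary) entries."""
    targets: Dict[int, Set[int]] = defaultdict(set)
    for site, target in events:
        targets[site].add(target)  # repeated hits collapse into distinct targets
    resolved = {}
    for site, seen in targets.items():
        home = module_of(site, modules)
        resolved[site] = [
            (t, module_of(t, modules), module_of(t, modules) != home) for t in sorted(seen)
        ]
    return resolved


if __name__ == "__main__":
    modules = [("app.exe", 0x400000, 0x10000), ("user32.dll", 0x77D40000, 0x90000)]
    events = [(0x401000, 0x402000), (0x401000, 0x77D50000), (0x401000, 0x402000)]
    print(resolve_calls(events, modules))
```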

You can see how it all works in a five-minute (13 MB) Flash video; click the image below to watch it.

Here is some more information about the process which I could not put into the video itself:

The whole Call Resolver functionality is not part of BinNavi itself but implemented as a plugin. This shows how easily users of BinNavi can extend the BinNavi GUI with new functionality and how powerful the debugging and graphing API of BinNavi is. In fact, you can download the code of the plugin here if you want to check it out yourself. This plugin was written in Java but it could have been written in Jython or JRuby as well.

Storing disassembly data in a MySQL database gives the plugin an enormous advantage: It is really, really simple to find the addresses of dynamic function calls. A single SQL query does the trick. In most other reverse engineering tools the plugin would need to go through all functions/basic blocks/instructions of the modules to find the dynamic function call instructions.
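To illustrate the “single SQL query” point, here is a self-contained Python sketch using sqlite3 and a made-up, heavily simplified instructions table (address, mnemonic, operand kind). BinNavi’s real MySQL schema is different and is not reproduced here; only the shape of the query matters: select call instructions whose operand is not an immediate address.

```python
import sqlite3
from typing import List

# Hypothetical schema for illustration only; BinNavi's actual tables differ.
SCHEMA = "CREATE TABLE instructions (address INTEGER, mnemonic TEXT, operand_kind TEXT)"

DYNAMIC_CALLS = """
    SELECT address FROM instructions
    WHERE mnemonic = 'call' AND operand_kind <> 'immediate'
    ORDER BY address
"""


def find_dynamic_calls(conn: sqlite3.Connection) -> List[int]:
    """Addresses of call instructions whose target is computed at runtime."""
    return [row[0] for row in conn.execute(DYNAMIC_CALLS)]


def demo_db() -> sqlite3.Connection:
    conn = sqlite3.connect(":memory:")
    conn.execute(SCHEMA)
    conn.executemany(
        "INSERT INTO instructions VALUES (?, ?, ?)",
        [
            (0x401000, "call", "immediate"),  # direct call, target known statically
            (0x401010, "call", "register"),   # e.g. call eax
            (0x401020, "call", "memory"),     # e.g. call [ecx+8], typical vtable dispatch
            (0x401030, "mov", "register"),
        ],
    )
    return conn


if __name__ == "__main__":
    print([hex(a) for a in find_dynamic_calls(demo_db())])
```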

Setting breakpoints only on dynamic function call instructions brought a big speed improvement compared to just tracing the whole target program. As you can see in the video, the target program stays responsive enough to be used. This is very useful because it allows the user of the Call Resolver to control what functionality is executed and therefore what dynamic function calls are traced.

Of course, the dynamic approach has downsides too. We need a way to execute the target program. If all we have is a non-executable memory dump of some suspicious file, we cannot use dynamic function call analysis. Even if it is possible to execute the target program, it is easy to miss function calls that are never executed, or call targets that are never reached while the tracer is attached to the process. This is especially true with a heuristic like BinNavi’s, which stops resolving function calls that “always” (really: more than 20 times) seem to go to the same target address.
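The “more than 20 times to the same target” rule can be sketched as a small piece of per-call-site state. This Python version is an assumption about how such a rule might be expressed (the class and method names are hypothetical, not the plugin’s code): it keeps a breakpoint until a call site has been hit more than the threshold number of times while only ever going to a single target.

```python
from collections import defaultdict
from typing import DefaultDict, Set


class CallSiteTracker:
    """Decide when a dynamic call site has been observed often enough."""

    def __init__(self, threshold: int = 20) -> None:
        self.threshold = threshold
        self.hits: DefaultDict[int, int] = defaultdict(int)
        self.targets: DefaultDict[int, Set[int]] = defaultdict(set)

    def on_hit(self, site: int, target: int) -> bool:
        """Record one resolved call; return True to keep the breakpoint, False to remove it."""
        self.hits[site] += 1
        self.targets[site].add(target)
        single_target = len(self.targets[site]) == 1
        return not (single_target and self.hits[site] > self.threshold)


if __name__ == "__main__":
    tracker = CallSiteTracker()
    decisions = [tracker.on_hit(0x401000, 0x402000) for _ in range(21)]
    print(decisions[-2:])  # [True, False]: the 21st identical hit retires the breakpoint
```

The trade-off described above is visible here: once a site is retired, a rarely used second target will never be observed.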

So, what about you? I’d like to hear about your experiences with resolving dynamic function calls. Are you more of a fan of a static solution or a dynamic solution?

Black Hat DC "report"

Wednesday, February 10th, 2010

As some of you might know, I gave a talk about fuzzing at BH DC this year; the slides and the white paper are below. I strongly suggest looking at the white paper first, as the slides are full of pictures and therefore not really useful from a learning point of view. If you have any questions or suggestions about the content, please feel free to email me or comment on this blog post.

I am not a big fan of conference reports and the like, but I want to spend a few words on the attack shown by Dionysus Blazakis, as I found it very relevant for real-world exploitation scenarios. I do not want to explain again what he did (both the white paper and the slides are public), but the important facts are mainly two:

- Defeating DEP by using JIT spraying

- Defeating ASLR by exploiting a weakness in how hash maps are ordered

In Flash it is possible to combine the two: JIT-spray a piece of memory, insert the function object (containing the shellcode) into a dictionary/set that uses hash maps for storing data, and then use the hash map ordering weakness to find the address of the shellcode.

This technique is so cool because JIT spraying does not work just on Flash, but on anything that contains a JIT compiler producing predictable output, and it is not trivially fixable. The ASLR bypass is easier to fix (well, sort of), but it is still one of the most advanced attacks against ASLR we have seen so far.

The bottom line: the sky isn’t falling, but if you are an exploit writer you really want to learn this technique. If you are not, you should learn it anyway: I expect to see quite a lot of exploits using it.

[slideshare id=3127552&doc=0knowfuzz-bh-100210153540-phpapp01]

[slideshare id=3127566&doc=bh-whitepaper-100210153835-phpapp01&type=d]

staff++

Wednesday, February 3rd, 2010

Hi everyone,

I am the newest member of team zynamics. My name is Tim Kornau. I recently finished my diploma thesis in IT security at Ruhr-University Bochum, which covered return-oriented programming on the ARM architecture. I will post a summary of the thesis here in a follow-up blog post soon. For the impatient, you can already go ahead and read it here.

Primarily I will be working with Sebastian Porst on BinNavi and extending its capabilities even further. Right now I am working on the new MIPS REIL translator featured in the upcoming BinNavi 3.0 release.

If you have any questions about REIL, BinNavi, ARM, return-oriented programming or are just interested in sharing ideas about the topics, I am happy to talk to you.

I am looking forward to an awesome time at zynamics and a lot of new things to learn and do.

Automated signature generation for malware (teaser & help needed)

Tuesday, February 2nd, 2010

Hey all,

I promised a while ago on my personal blog that I would write about the work that has been done here at zynamics regarding the automated extraction of malware signatures. Full details are coming up in the next two to three weeks, but before that, I’d like to ask you, dear reader, for a favour:

We have a number of automatically generated ClamAV signatures here, and while we can test them for false positives locally, our “goodware” zoo is clearly limited. We would much appreciate it if you could take these autogenerated signatures and check whether they match any program that is “goodware”, i.e. known not to be malware.

You can use the above file by simply running “clamscan -d ./auto.generated.sigs.ndb” followed by the files or directories you want to scan (add -r to recurse into subdirectories).

Personally, I am really curious to see if any of the signatures end up creating false positives…

Cheers,

Halvar/Thomas

From disassembly to isolating important functions in less than four minutes

Monday, February 1st, 2010

My earlier blog post about the improved Differential Debugging feature of BinNavi 3.0 generated a lot of interest, so I have decided to write a follow-up post. Unlike last time, I want you to be able to see what BinNavi can do and not just read about it. I have therefore created a short Flash video that shows how to find important code in disassembled files using the BinNavi debugger and its trace mode, which is the core of Differential Debugging.

In the video I start with a disassembled IDB file of Pidgin’s liboscar.dll. The first step is to import the data from the IDB file into a BinNavi MySQL database. Afterwards I open the call graph of liboscar.dll and put the BinNavi Win32 debugger into function trace mode. In this mode trace events are generated every time a function of liboscar.dll is executed. This allows me to find the functions responsible for sending messages in just a few seconds.

You can find the video here. (5 MB Flash video with a resolution of 1280 x 1024)

Now, this video shows only the most primitive use case of Differential Debugging. Nevertheless, even this use case is already very powerful: finding out in just a few seconds which code is responsible for which functionality of a program is incredibly useful, no matter what you are trying to do.

However, there are situations where this simple use case is not enough. Maybe you are analyzing a daemon process where you can’t just click on some GUI element to isolate events. For these situations we provide more advanced features, like the ability to compare and combine recorded traces using the set operations I mentioned in my earlier post.
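The set-operation idea can be illustrated with plain Python sets of function addresses. In this sketch (hypothetical traces, not BinNavi’s API), functions hit in every trace that exercised a feature are kept, and anything that also shows up in a baseline trace recorded without using the feature is discarded.

```python
from typing import Iterable, Set


def isolate(action_traces: Iterable[Iterable[int]], baseline_traces: Iterable[Iterable[int]]) -> Set[int]:
    """Functions hit in every action trace but in no baseline trace."""
    action = [set(trace) for trace in action_traces]
    if not action:
        return set()
    common = set.intersection(*action)     # executed every time the feature was used
    noise = set().union(*baseline_traces)  # executed even when the feature was not used
    return common - noise


if __name__ == "__main__":
    send_1 = {0x1000, 0x2000, 0x3000}  # hypothetical trace: sending a message
    send_2 = {0x1000, 0x3000, 0x4000}  # hypothetical trace: sending another message
    idle = {0x1000}                    # hypothetical trace: program idling
    print({hex(a) for a in isolate([send_1, send_2], [idle])})
```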